[ad_1]

Go to our Rockset Group to evaluate earlier Workplace Hours or to see what’s developing.

Throughout our Workplace Hours a number of weeks in the past, Tyler and I went over what are SQL transformations and real-time rollups, find out how to apply them, and the way they have an effect on your question efficiency and index storage measurement. Beneath, we’ll cowl among the highlights.

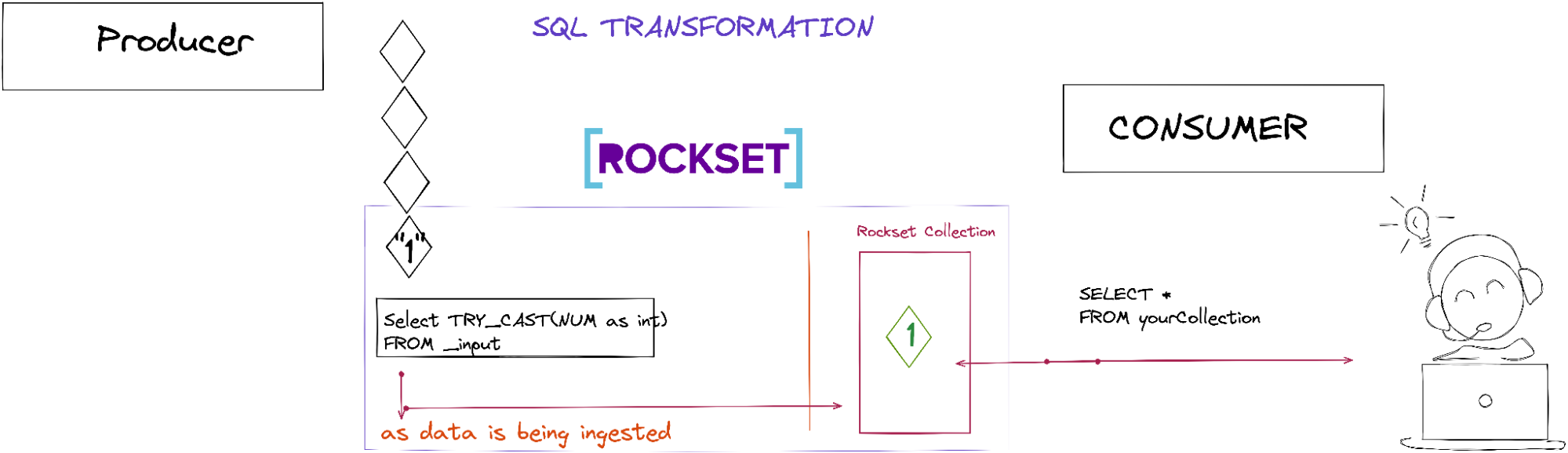

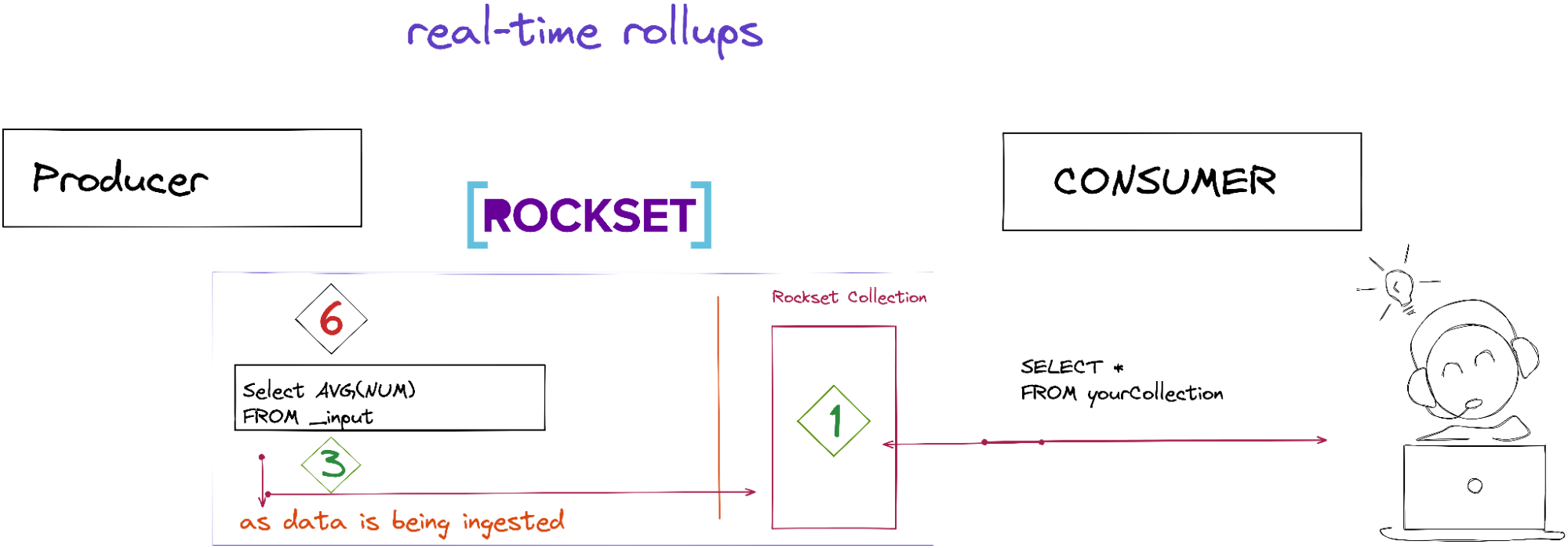

SQL transformations and real-time rollups happen at ingestion time earlier than the Rockset assortment is populated with information. Right here’s the diagram I did throughout Rockset Workplace Hours.

Tyler demonstrated how question efficiency and storage are impacted whenever you use SQL transformations and real-time rollups with three totally different queries. Beneath, I’ll describe how we constructed the gathering and what we’re doing within the queries.

Preliminary Question With no SQL Transformations or Rollups Utilized

On this question, we’re constructing a time-series object that grabs probably the most lively tweeters inside the final day. There aren’t any SQL transformations or rollups, so the gathering accommodates simply the uncooked information.

-- Preliminary question towards the plain assortment 1day: 12sec

with _data as (

SELECT

rely(*) tweets,

forged(DATE_TRUNC('HOUR',PARSE_TIMESTAMP('%a %h %d %H:%M:%S %z %Y', t.created_at)) as string) as event_date_hour,

t.person.id,

arbitrary(t.person.identify) identify

FROM

officehours."twitter-firehose" t trace(access_path=column_scan)

the place

t.person.id shouldn't be null

and t.person.id shouldn't be undefined

and PARSE_TIMESTAMP('%a %h %d %H:%M:%S %z %Y', t.created_at) > CURRENT_TIMESTAMP() - DAYS(1)

group by

t.person.id,

event_date_hour

order by

event_date_hour desc

),

_intermediate as (

choose

array_agg(event_date_hour) _keys,

array_agg(tweets) _values,

id,

arbitrary(identify) identify

from

_data

group by

_data.id

)

choose

object(_keys, _values) as timeseries,

id,

identify

from

_intermediate

order by size(_keys) desc

restrict 100

Supply: GitHub gist

- On line 4 we’re counting the overall tweets

- On line 7 we’re pulling the ARBITRARY for

t.person.identify— you possibly can learn extra about ARBITRARY - On traces 15 and 16 we’re doing aggregations on

t.person.idandevent_date_hour - On line 5 we create the

event_date_hourby doing a CAST - On line 11-12 we filter person.id that isn’t null or undefined

- On line 13 we get the most recent tweeters from the final day

- On traces 14-16 we do a GROUP BY with

t.person.idandevent_date_hour - On traces 20-37 we construct our time collection object

- On line 38 we return the highest 100 tweeters

This inefficient contrived question was run on dwell information with a medium VI and took about 7 seconds to execute.

Second Question With SQL Transformation Utilized Solely

Within the second question, we utilized SQL transformations after we created the gathering.

SELECT

*

, forged(DATE_TRUNC('HOUR', PARSE_TIMESTAMP('%a %h %d %H:%M:%S %z %Y', i.created_at)) as string) as event_date_hour

, PARSE_TIMESTAMP('%a %h %d %H:%M:%S %z %Y', i.created_at) as _event_time

, forged(i.id as string) as id

FROM

_input i

the place

i.person.id shouldn't be null

and that i.person.id shouldn't be undefined

Supply: GitHub gist

- On line 3, we create an

event_date_hour - On line 4, we create an

event_time - On line 5, we create an id as a string

- On traces 9 and 10, we choose

person.idthat isn’t null or undefined

After we apply the transformations, our SQL question seems to be extra simplified than the preliminary question:

with _data as (

SELECT

rely(*) tweets,

event_date_hour,

t.person.id,

arbitrary(t.person.identify) identify

FROM

officehours."twitter-firehose_sqlTransformation" t trace(access_path=column_scan)

the place

_event_time > CURRENT_TIMESTAMP() - DAYS(1)

group by

t.person.id,

event_date_hour

order by

event_date_hour desc

),

_intermediate as (

choose

array_agg(event_date_hour) _keys,

array_agg(tweets) _values,

id,

arbitrary(identify) identify

from

_data

group by

_data.id

)

choose

object(_keys, _values) as timeseries,

id,

identify

from

_intermediate

order by size(_keys) desc

restrict 100

Supply: GitHub gist

- On line 3, we’re counting the overall tweets

- On line 6 we’re pulling the ARBITRARY for

t.person.identify - On line 10, the filter is now on the timestamp

- On traces 11-13 we nonetheless do a GROUP BY with

t.person.idandevent_date_hour - On traces 17-34 we nonetheless create our time-series object

Principally, we excluded no matter we utilized throughout SQL transformations within the question itself. After we run the question, the storage index measurement doesn’t change an excessive amount of, however the question efficiency goes from seven seconds to 3 seconds or so. By doing SQL transformations, we save on compute, and it exhibits — the question performs a lot quicker.

Third Question With SQL Transformation and Rollups Utilized

Within the third question we carried out SQL transformations and rollups after we created the gathering.

SELECT

rely(*) tweets,

forged(DATE_TRUNC('HOUR', PARSE_TIMESTAMP('%a %h %d %H:%M:%S %z %Y', i.created_at)) as string) as event_date_hour_str,

DATE_TRUNC('HOUR', PARSE_TIMESTAMP('%a %h %d %H:%M:%S %z %Y', i.created_at)) as event_date_hour,

forged(i.person.id as string) id,

arbitrary(i.person.identify) identify

FROM

_input i

the place

i.person.id shouldn't be null

and that i.person.id shouldn't be undefined

group by

i.person.id,

event_date_hour_str,

event_date_hour

Supply: GitHub gist

Along with what we did utilized earlier for the SQL transformations, we’re now making use of rollups as effectively.

- On line 2, we’re counting all of the tweets

- On line 6 we’re pulling the ARBITRARY

- On traces 12-15 we’re making use of the GROUP_BY

So now, our last SQL question seems to be like this:

with _data as (

SELECT

tweets,

event_date_hour_str,

event_date_hour,

id,

identify

FROM

officehours."twitter-firehose-rollup" t trace(access_path=column_scan)

the place

t.event_date_hour > CURRENT_TIMESTAMP() - DAYS(1)

order by

event_date_hour desc

),

_intermediate as (

choose

array_agg(event_date_hour_str) _keys,

array_agg(tweets) _values,

id,

arbitrary(identify) identify

from

_data

group by

_data.id

)

choose

object(_keys, _values) as timeseries,

id,

identify

from

_intermediate

order by size(_keys) desc

Restrict 100

Supply: GitHub gist

After we apply the SQL transformations with the rollups, our question goes from a womping seven seconds to 2 seconds. Additionally, our storage index measurement goes from 250 GiB to 11 GiB now!

Benefits/Issues for SQL Transformations and Actual-Time Rollups

SQL Transformations

Benefits:

- Improves question efficiency

- Can drop and masks fields at ingestion time

- Enhance compute price

Consideration:

- Have to know what your information seems to be like

Actual-Time Rollups

Benefits:

- Improves question efficiency and storage index measurement

- Information is up to date inside the second

- Don’t want to fret about out-of-order arrivals

- Precisely-once semantics

- Enhance compute price

Issues:

- Information decision — You’ll lose the uncooked information decision. If you happen to want a replica of the uncooked information, create one other assortment with out rollups. If you wish to keep away from double storage, you possibly can set a retention coverage whenever you create a set.

Rockset’s SQL-based transformations and rollups assist you to carry out information transformation that improves question efficiency and reduces storage index measurement. The ultimate information transformation is what’s endured within the Rockset assortment. It’s necessary to notice that real-time rollups will repeatedly run on incoming information. By way of out-of-order arrivals, Rockset will course of them and replace the required information precisely as if these occasions really arrived in-order and on-time. Lastly, Rockset ensures exactly-once semantics for streaming sources, like Kafka and Kinesis.

You’ll be able to catch the replay of Tyler’s Workplace Hours session on the Rockset Group. You probably have extra questions, please discover Tyler and Nadine within the Rockset Group.

Embedded content material: https://youtu.be/dUrHqoVKC34

Assets:

Rockset is the main real-time analytics platform constructed for the cloud, delivering quick analytics on real-time information with shocking effectivity. Be taught extra at rockset.com.

[ad_2]