Boston Dynamics has turned its Spot quadruped, typically used for inspections, into a robot tour guide. The company integrated the robot with ChatGPT and other AI models as a proof of concept for the potential robotics applications of foundation models.

In the last year, we’ve seen huge advances in the abilities of generative AI, and many of those advances have been fueled by the rise of large Foundation Models (FMs). FMs are large AI systems that are trained on a massive dataset.

These FMs typically have millions or billions of parameters and were trained by scraping raw data from the public internet. All of this data gives them the ability to develop emergent behaviors, or the ability to perform tasks outside of what they were directly trained on, allowing them to be adapted for a variety of applications and act as a foundation for other algorithms.

The Boston Dynamics team spent the summer putting together some proof-of-concept demos using FMs for robotic applications. The team then expanded on these demos during an internal hackathon. The company was particularly interested in a demo of Spot making decisions in real time based on the output of FMs.

Large language models (LLMs), like ChatGPT, are basically very capable autocomplete algorithms, with the ability to take in a stream of text and predict the next bit of text. The Boston Dynamics team was interested in LLMs’ ability to roleplay, replicate culture and nuance, form plans, and maintain coherence over time. The team was also inspired by recently released Visual Question Answering (VQA) models that can caption images and answer simple questions about them.

A robot tour guide seemed like the perfect demo to test these concepts. The robot would walk around, look at objects in the environment, and then use a VQA or captioning model to describe them. The robot would also use an LLM to elaborate on these descriptions, answer questions from the tour audience, and plan what actions to take next.

In this scenario, the LLM acts as an improv actor, according to the Boston Dynamics team. The engineer provides it with a broad-strokes script and the LLM fills in the blanks on the fly. The team wanted to play into the strengths of the LLM, so they weren’t looking for a perfectly factual tour. Instead, they were looking for entertainment, interactivity, and nuance.

Turning Spot into a tour guide

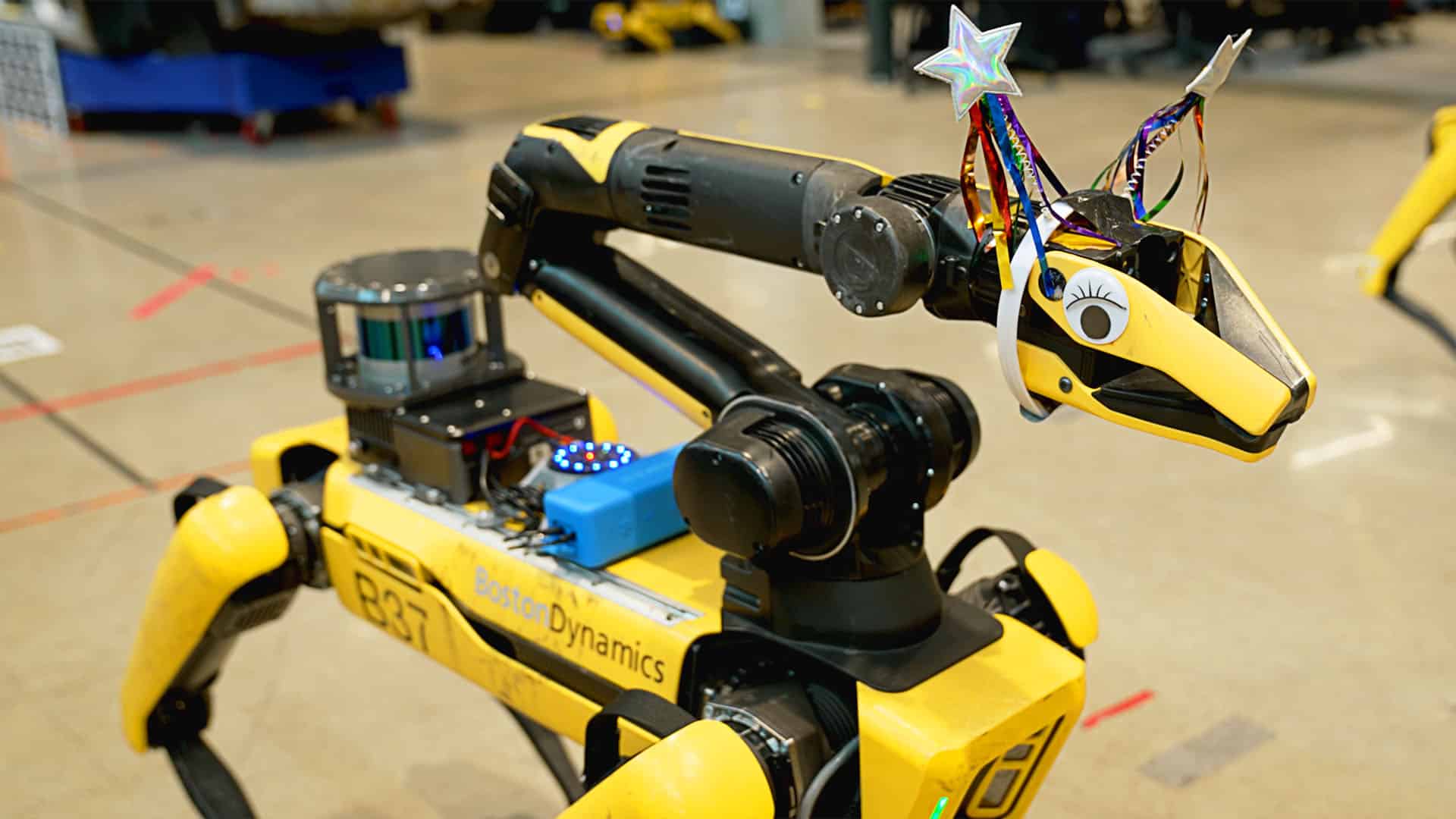

The hardware setup for the Spot tour guide. 1. Spot EAP 2; 2. Respeaker V2; 3. Bluetooth Speaker; 4. Spot Arm and gripper camera. | Source: Boston Dynamics

The demo that the team planned required Spot to be able to speak to a group and hear questions and prompts from them. Boston Dynamics 3D printed a vibration-resistant mount for a Respeaker V2 speaker. They attached this to Spot’s EAP 2 payload over USB.

Spot is controlled using an offboard computer, either a desktop PC or a laptop, which uses Spot’s SDK to communicate. The team added a simple Spot SDK service to communicate audio with the EAP 2 payload.

Now that Spot had the ability to handle audio, the team needed to give it conversation skills. They started with OpenAI’s ChatGPT API on gpt-3.5, and then upgraded to gpt-4 when it became available. Additionally, the team ran tests on smaller open-source LLMs.

The team took inspiration from research at Microsoft and prompted GPT by making it appear as if it was writing the next line in a Python script. They then provided English documentation to the LLM in the form of comments and evaluated the output of the LLM as if it were Python code.
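
This prompting style can be illustrated with a minimal sketch. It is not Boston Dynamics' code: the action names `go_to`, `say`, and `ask`, the prompt layout, and the helpers `build_prompt` and `parse_action` are all hypothetical, and no LLM is actually called. The sketch also assumes that the model's output is parsed and checked against an allow-list rather than executed directly, which is one cautious way to treat output "as if it were Python code."

```python
import ast

# Hypothetical action API, written as comments the way the LLM would see it.
PROMPT_HEADER = '''\
# Tour guide API (illustrative names, not the real Spot SDK):
# go_to(location: str)  -> walk to a named location on the map
# say(text: str)        -> speak a phrase to the audience
# ask(text: str)        -> ask the audience a question
'''

ALLOWED_ACTIONS = {"go_to", "say", "ask"}


def build_prompt(state_lines, history):
    """Assemble a script-like prompt; the LLM is asked to write the next line."""
    state = "".join(f"# {line}\n" for line in state_lines)
    past = "".join(f"{line}\n" for line in history)
    return PROMPT_HEADER + state + past


def parse_action(llm_line):
    """Parse one line of LLM output as a Python call without executing it.

    Returns (function_name, argument). Raises ValueError unless the line is a
    single call to an allowed action with exactly one string literal argument.
    """
    try:
        tree = ast.parse(llm_line.strip(), mode="eval")
    except SyntaxError as exc:
        raise ValueError(f"not a Python expression: {llm_line!r}") from exc
    call = tree.body
    if not (isinstance(call, ast.Call) and isinstance(call.func, ast.Name)):
        raise ValueError("expected a simple function call")
    if call.func.id not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {call.func.id}")
    if len(call.args) != 1 or call.keywords:
        raise ValueError("expected exactly one positional argument")
    arg = call.args[0]
    if not (isinstance(arg, ast.Constant) and isinstance(arg.value, str)):
        raise ValueError("argument must be a string literal")
    return call.func.id, arg.value
```

A caller would send `build_prompt(...)` to the model, pass the returned line to `parse_action`, and dispatch the resulting pair to the matching robot behavior.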

The Boston Dynamics team also gave the LLM access to its SDK, a map of the tour site with one-line descriptions of each location, and the ability to say phrases or ask questions. They did this by integrating VQA and speech-to-text software.

They fed the robot’s gripper camera and front body camera into BLIP-2, and ran it in either visual question answering mode or image captioning mode. This runs about once a second, and the results are fed directly into the prompt.
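
As a rough sketch of how captions refreshed about once a second could be folded into a prompt, the class below throttles calls to a captioning function and renders the latest results as comment lines. The `CaptionFeed` class, the camera names, and the `captioner` callable are hypothetical stand-ins; BLIP-2 itself is not invoked here.

```python
import time


class CaptionFeed:
    """Refresh camera captions at most once per period and render prompt lines."""

    def __init__(self, captioner, period_s=1.0, clock=time.monotonic):
        self.captioner = captioner  # hypothetical callable: camera name -> caption
        self.period_s = period_s
        self.clock = clock          # injectable so the throttle can be tested
        self._last_update = None
        self.captions = {}

    def update(self, cameras):
        """Re-caption every camera if the period has elapsed; report whether it ran."""
        now = self.clock()
        if self._last_update is not None and now - self._last_update < self.period_s:
            return False
        for camera in cameras:
            self.captions[camera] = self.captioner(camera)
        self._last_update = now
        return True

    def prompt_lines(self):
        """Render the latest captions as comment lines for the LLM prompt."""
        return [f"# {cam} sees: {text}" for cam, text in sorted(self.captions.items())]
```

Calling `update()` from the robot's main loop keeps the prompt current without invoking the vision model on every iteration.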

To give Spot the ability to hear, the team fed microphone data in chunks to OpenAI’s Whisper to convert it into English text. Spot waits for a wake-up word, like “Hey, Spot,” before putting that text into the prompt, and it suppresses audio when it is speaking itself.
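
The gating logic described here might look something like the following sketch. It assumes the transcript has already been produced by a speech-to-text step and that the wake phrase appears at the start of the chunk; the function name `heard_request` and the regular expression are illustrative choices, not details from the team.

```python
import re

# Matches "Hey Spot" or "Hey, Spot" at the start of a transcript, any casing,
# plus any punctuation or spaces that immediately follow it.
WAKE_RE = re.compile(r"^\s*hey,?\s+spot\b[\s,.!?]*", re.IGNORECASE)


def heard_request(transcript, robot_is_speaking):
    """Return the text to add to the prompt, or None if the chunk is ignored."""
    if robot_is_speaking:
        # Suppress input while the robot talks so it does not hear itself.
        return None
    match = WAKE_RE.match(transcript)
    if match is None:
        return None
    request = transcript[match.end():].strip()
    return request or None
```

With this sketch, `heard_request("Hey, Spot, who is Marc Raibert?", False)` yields the question text, while the same transcript is dropped if `robot_is_speaking` is true.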

Because ChatGPT generates text-based responses, the team needed to run these through a text-to-speech tool so the robot could respond to the audience. The team tried a number of off-the-shelf text-to-speech methods, but they settled on using the cloud service ElevenLabs. To help reduce latency, they also streamed the text to the platform as “phrases” in parallel and then played back the generated audio.
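
One way to picture the latency trick is the sketch below, which splits a response into phrases, synthesizes them concurrently, and keeps the results in speaking order. The `synthesize` argument is a hypothetical stand-in for a text-to-speech request; the ElevenLabs API is not called, and the punctuation-based splitting rule is an assumption.

```python
import re
from concurrent.futures import ThreadPoolExecutor


def split_phrases(text):
    """Split text into short phrases after clause or sentence punctuation."""
    parts = re.split(r"(?<=[.!?;,])\s+", text.strip())
    return [part for part in parts if part]


def synthesize_in_parallel(text, synthesize, max_workers=4):
    """Synthesize each phrase concurrently and return the audio in phrase order.

    `synthesize` is a hypothetical callable mapping a phrase to audio bytes.
    """
    phrases = split_phrases(text)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() yields results in input order even if requests finish out of order.
        return list(pool.map(synthesize, phrases))
```

Because the first phrase is short, its audio can start playing while later phrases are still being generated.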

The team also wanted Spot to have more natural-looking body language. So they used a feature in the Spot 3.3 update that allows the robot to detect and track moving objects to guess where the nearest person was, and then had the robot turn its arm toward that person.
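
The geometry behind "turn toward the nearest person" can be sketched in a few lines. This assumes detections arrive as (x, y) positions in meters in a robot body frame with x pointing forward and y pointing left; that frame convention and the function name `bearing_to_nearest` are assumptions for illustration, not details of the Spot SDK.

```python
import math


def bearing_to_nearest(detections):
    """Return the yaw angle in radians toward the closest detection.

    `detections` is a list of (x, y) positions in an assumed body frame
    (x forward, y left). Returns None when nothing is detected.
    """
    if not detections:
        return None
    x, y = min(detections, key=lambda p: math.hypot(p[0], p[1]))
    return math.atan2(y, x)
```

A positive result means the nearest tracked object is to the robot's left under the assumed frame, so the arm would rotate counterclockwise to face it.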

Using a lowpass filter on the generated speech, the team was able to have the gripper mimic speech, sort of like the mouth of a puppet. This illusion was enhanced when the team added costumes or googly eyes to the gripper.
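
A minimal sketch of the puppet-mouth idea follows. It rectifies the audio and smooths it with a one-pole lowpass filter to get a slowly varying loudness level, then clamps that level to a gripper opening fraction. The filter type, the 5 Hz cutoff, and the function name `gripper_openings` are assumptions; the team's actual filter design is not described.

```python
import math


def gripper_openings(samples, sample_rate_hz, cutoff_hz=5.0, gain=1.0):
    """Map audio samples in [-1, 1] to gripper opening fractions in [0, 1]."""
    dt = 1.0 / sample_rate_hz
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    alpha = dt / (rc + dt)  # smoothing factor of a one-pole lowpass filter
    level = 0.0
    openings = []
    for sample in samples:
        # Lowpass the rectified signal so the gripper follows loudness,
        # not the individual oscillations of the waveform.
        level += alpha * (abs(sample) - level)
        openings.append(min(1.0, max(0.0, gain * level)))
    return openings
```

Louder stretches of speech open the gripper wider, and pauses let it close, which is what gives the impression of a talking mouth.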

How did Spot perform?

The team gave Spot’s arm a hat and googly eyes to make it more appealing. | Source: Boston Dynamics

The team noticed new behavior emerging quickly from the robot’s very simple action space. They asked the robot, “Who is Marc Raibert?” The robot didn’t know the answer and told the team that it would go to the IT help desk and ask, which it wasn’t programmed to do. The team also asked Spot who its parents were, and it went to where the older versions of Spot, the Spot V1 and BigDog, were displayed in the office.

These behaviors show the power of statistical association between the concepts of “help desk” and “asking a question,” and “parents” with “old.” They don’t suggest the LLM is conscious or intelligent in a human sense, according to the team.

The LLM also proved to be good at staying in character, even as the team gave it more absurd personalities to try out.

While the LLM performed well, it did frequently make things up during the tour. For example, it kept telling the team that Stretch, Boston Dynamics’ logistics robot, is for yoga.

Moving forward, the team plans to continue exploring the intersection of artificial intelligence and robotics. To them, robotics provides a good way to “ground” large foundation models in the real world. Meanwhile, these models also help provide cultural context, general commonsense knowledge, and flexibility that could be useful for many robotic tasks.
